Why Google’s Information Gain & Trust Patents Make Most “200 Ranking Factors” Guides Obsolete

For over a decade, “200 ranking factors” lists have defined how SEOs think about Google: long checklists of on‑page, off‑page, and technical signals, carefully updated each year. Recent Google patents, however, describe a very different reality: systems that explicitly reward information gain, diversity, and behavior‑based trust, and that actively down‑weight redundant, copy‑paste content. In this article you’ll see what those patents actually say, why they make static factor lists conceptually obsolete, and how a consensus‑plus‑ignorance approach outperforms classical “SERP consensus SEO”. The result is a map of ranking factors for 2026 and beyond, built on the Ignorance Graph.

SEO Ranking Factors 2026 and beyond – Screenshot alsoasked.com

What is a ranking factor in SEO?

A ranking factor is any signal, attribute, or behavioral pattern that a search engine’s algorithm uses — alone or in combination with others — to assign a position to a document in a set of search results. The term is deceptively simple: in practice, ranking factors are not independent, static toggles. They are weighted, context-dependent inputs to a dynamic scoring model that is continuously updated by user behavior, query intent, and the competitive information landscape of the entire document corpus.

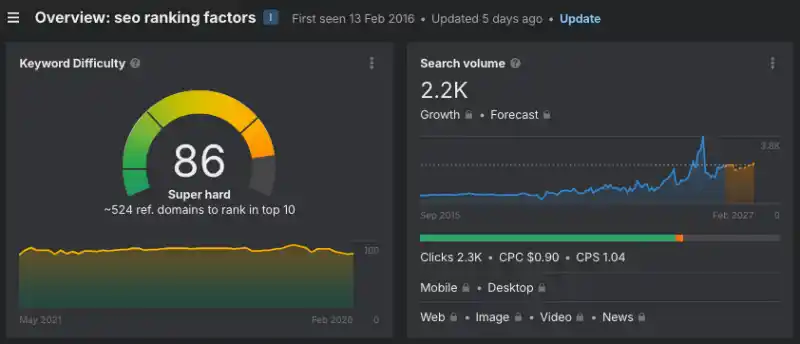

SEO Ranking Factors ahrefs.com

The most important distinction in 2026 is between candidate selection factors (which get a page into the eligible pool) and re-ranking factors (which determine final position within that pool). Most “200 ranking factors” lists conflate the two. Google’s own patents describe them as sequential, structurally different processes. Passing the first stage does not guarantee performance in the second.

| Factor Stage | What it controls | Primary signals |
|---|---|---|
| Candidate selection | Whether the page enters the result set at all | Crawlability, indexation, topical relevance, basic quality thresholds |
| First-pass ranking | Initial score within the candidate set | Content match, link authority, entity presence, structured data |
| Re-ranking (dynamic) | Final position after diversity and behavior adjustments | Information gain score, CTR, dwell time, pogo-sticking, result diversity |
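The split between gating and re-ranking can be made concrete with a toy pipeline. This is a sketch under stated assumptions, not a description of Google's implementation: the `Page` fields, the `min_relevance` threshold, and the `gain_weight` blend are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    indexable: bool    # hypothetical: passed crawl and index checks
    relevance: float   # hypothetical first-pass score in [0, 1]
    info_gain: float   # hypothetical re-ranking signal in [0, 1]


def select_candidates(pages, min_relevance=0.3):
    """Stage 1: a gate. Pages that fail here never compete."""
    return [p for p in pages if p.indexable and p.relevance >= min_relevance]


def first_pass(candidates):
    """Stage 2: initial ordering by the first-pass score."""
    return sorted(candidates, key=lambda p: p.relevance, reverse=True)


def rerank(ranked, gain_weight=0.5):
    """Stage 3: blend the first-pass score with the information gain signal."""
    def blended(p):
        return (1 - gain_weight) * p.relevance + gain_weight * p.info_gain
    return sorted(ranked, key=blended, reverse=True)


pages = [
    Page("a", True, 0.9, 0.1),    # strong match, redundant content
    Page("b", True, 0.7, 0.8),    # weaker match, high information gain
    Page("c", False, 0.95, 0.9),  # never indexed, so never ranked
    Page("d", True, 0.2, 0.9),    # below the relevance gate
]
initial = [p.url for p in first_pass(select_candidates(pages))]
final = [p.url for p in rerank(first_pass(select_candidates(pages)))]
print(initial, final)
```

Pages `c` and `d` show why passing the second stage is impossible without passing the first: their information gain never gets evaluated.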

How many SEO ranking factors are there?

The figure of “200+ ranking factors” was popularized by Brian Dean’s 2013 Backlinko compilation and has been reproduced in slightly updated form ever since. Google representatives have spoken of “more than 200 signals”, but Google has never published or confirmed any such list. What Google has confirmed, through patents, developer documentation, and public statements, is that ranking is the output of machine-learned models trained on thousands of signals, many of which are derived rather than directly set. The number “200” is a shorthand that obscures the real structure: a small number of high-weight signals (content quality, link authority, page experience, user behavior) operating on top of a large base of low-weight qualifying signals.

For practical SEO in 2026, the more useful question is not “how many factors are there?” but “which factors have threshold effects that gate entry, which have marginal returns at scale, and which create durable behavioral trust that compounds over time?” The Ignorance Graph methodology answers those questions structurally; the 200-factor checklist cannot.

The three stages of SEO ranking: Discovery, Relevance, and Authority

Google’s indexing and ranking pipeline can be mapped to three sequential gates:

Discovery determines whether Googlebot finds, crawls, and indexes a page at all. Technical SEO signals — crawl budget, internal link structure, robots.txt and noindex directives, page speed as it affects crawl efficiency, mobile rendering, and Core Web Vitals — all operate primarily at this gate. A page that fails discovery never enters any ranking competition.

Relevance determines whether the indexed page is topically matched to the user query. Content signals — keyword presence, semantic entity coverage, heading structure, schema markup, E-E-A-T signals, and topical authority of the domain — determine whether the page enters the candidate set for a given query. A page can be crawled but rank for nothing if its relevance signals are weak.

Authority determines position within the candidate set. Link signals (domain authority, link quality weighted by the Reasonable Surfer model), behavioral trust (CTR, dwell time, return-visit patterns), and the dynamic re-ranking systems based on information gain and result diversity all operate at this stage.
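Of the three gates, discovery is the easiest to check mechanically. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt rules, the URLs, and the `passes_discovery` helper are made-up examples, and a real audit would also cover rendering, canonicals, and crawl budget.

```python
from urllib.robotparser import RobotFileParser

# Example rules for a hypothetical site; parse() takes the file's lines.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
""".splitlines()


def passes_discovery(url, robots_lines, has_noindex, user_agent="Googlebot"):
    """True only if crawling is allowed and the page is not marked noindex."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url) and not has_noindex


print(passes_discovery("https://example.com/guide", ROBOTS_TXT, has_noindex=False))
print(passes_discovery("https://example.com/private/x", ROBOTS_TXT, has_noindex=False))
```

The `has_noindex` flag is passed in by the caller here; detecting it would mean inspecting the page's meta robots tag or `X-Robots-Tag` header.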

The most important SEO ranking factors in 2026

Drawing on confirmed Google documentation, verified patent filings (including US8117209B1, US10152520B1, and related information gain patents), and behavioral evidence from large-scale SERP analysis, the following factors carry the highest weight in 2026:

1. Content quality and information gain

Content quality in 2026 is not measured by word count, keyword density, or the presence of subheadings. Google’s information gain patents describe a system that compares a candidate page against a reference set of already-seen documents and scores the page by its marginal contribution — how much new, useful information it adds that the reference set did not already contain. Pages that are semantically redundant with the existing top results can be systematically down-weighted in the second-stage re-ranking process, regardless of how well-optimized they are by traditional checklists.

The practical implication: content that introduces novel taxonomies, unresolved questions, cross-context comparisons, or expert framing not present in the consensus cluster scores higher on information gain than content that restates the same core facts more verbosely. This is the theoretical foundation of the Ignorance Graph approach.
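The idea of scoring a page by its marginal contribution can be illustrated with a deliberately crude proxy. The sketch below measures the share of a candidate's terms that the reference set has not already used. This lexical overlap measure is an assumption chosen for clarity; the patents do not specify it, and a production system would more plausibly work on learned semantic representations.

```python
import re


def terms(text):
    """Lowercased word tokens as a set (a crude stand-in for concepts)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def information_gain(candidate, reference_docs):
    """Share of the candidate's terms that no reference document contains."""
    candidate_terms = terms(candidate)
    if not candidate_terms:
        return 0.0
    seen = set()
    for doc in reference_docs:
        seen |= terms(doc)
    return len(candidate_terms - seen) / len(candidate_terms)


reference = [
    "backlinks and content quality matter",
    "content quality and page speed matter",
]
clone = "content quality and backlinks matter"
novel = "content quality plus patent taxonomy"

print(information_gain(clone, reference))
print(information_gain(novel, reference))
```

The clone rearranges terms the reference set already covers, so its score is zero however well it is written; the second page is rewarded only for what it adds.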

2. Backlinks: quality weighted by behavioral probability (US8117209B1)

Backlinks remain a dominant ranking signal, but their mechanism has evolved significantly since the original PageRank formulation. Google patent US8117209B1 (Dean, Anderson, Battle; priority 2004, granted 2012) formalizes the Reasonable Surfer model: a dynamic link-weighting system that assigns a probability to each link based on features of the anchor text, the link’s position in the source document, the topical alignment between source and target, and, critically, actual user click behavior on those links.

Under this model, a link from a page where users regularly click through to your content carries more weight than a link from a high-authority page where users ignore all outbound links. The model builds both general rules (links with prominent, topically-aligned anchor text have higher selection probability) and document-specific rules (certain site-specific link positions are learned to have high or low click probability). The weights are recalculated periodically as user behavior data changes, making link authority a genuinely dynamic signal rather than a static accumulation.

| Link Feature | Effect on Reasonable Surfer Weight |
|---|---|
| Anchor text topically aligned with source and target | Higher weight: greater probability of selection |
| Link positioned in main body content near the top | Higher weight vs. footer or sidebar placement |
| Source document with high user engagement | Higher propagated trust through behavior-validated links |
| Commercial or unrelated anchor text on an editorial page | Lower weight: recognized as low-selection-probability link type |
| Links from parked domains or low-engagement pages | Significantly lower weight: document-specific negative rules |
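To see how per-link probabilities change what a link passes on, compare a uniform random surfer with a weighted one. This is a minimal sketch of PageRank-style propagation with per-link weights, not the patent's actual computation: the `weighted_pagerank` function, the damping value, and the example weights are illustrative assumptions, and pages without outlinks simply leak rank here.

```python
import math


def weighted_pagerank(links, damping=0.85, iterations=50):
    """links: {source: {target: click_weight}}, normalised per source page."""
    nodes = set(links)
    for targets in links.values():
        nodes.update(targets)
    n = len(nodes)
    rank = dict.fromkeys(nodes, 1.0 / n)
    for _ in range(iterations):
        new_rank = dict.fromkeys(nodes, (1.0 - damping) / n)
        for source, targets in links.items():
            total = sum(targets.values())
            if total == 0:
                continue
            for target, weight in targets.items():
                # Each link passes rank in proportion to its selection probability.
                new_rank[target] += damping * rank[source] * weight / total
        rank = new_rank
    return rank


# Same page, same two outlinks; only the assumed click probabilities differ.
surfer = weighted_pagerank({"hub": {"body_link_target": 0.9, "footer_link_target": 0.1}})
uniform = weighted_pagerank({"hub": {"body_link_target": 0.5, "footer_link_target": 0.5}})
print(surfer["body_link_target"], surfer["footer_link_target"])
```

Under the uniform model both targets end up with identical rank; under the weighted model the prominent body link receives most of what the hub has to give.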

3. E-E-A-T: Experience, Expertise, Authoritativeness, Trustworthiness

Google’s Search Quality Evaluator Guidelines define E-E-A-T as the framework human quality raters use to assess content. While E-E-A-T is not directly a ranking signal (raters do not change rankings), it correlates strongly with the signals that are: author entity recognition in the Knowledge Graph, on-site credential signals (author pages, bylines, structured data), domain-level reputation signals from third-party sources, and content that demonstrates first-hand experience with the topic rather than secondary aggregation. For YMYL (Your Money or Your Life) topics — health, finance, legal, safety — the E-E-A-T bar is substantially higher and Google has confirmed that quality algorithmic updates specifically target these verticals.

4. Core Web Vitals and page experience

Core Web Vitals — Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in 2024), and Cumulative Layout Shift (CLS) — are confirmed Google ranking signals as part of the page experience update. They operate primarily as a tiebreaker at the margin for pages already competing closely on content and authority signals. LCP under 2.5 seconds, INP under 200 milliseconds, and CLS under 0.1 are the “good” thresholds.

Mobile-first indexing means Google’s crawler uses the mobile version of a page as the primary indexed version. A page that passes Core Web Vitals on desktop but fails on mobile is assessed on its mobile performance.
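The thresholds are simple enough to encode directly. A small sketch, assuming you already have field measurements per device; the metric key names and the sample numbers are hypothetical, and values exactly at a limit are treated as passing.

```python
# "Good" limits as quoted above; key names are illustrative.
GOOD_LIMITS = {"lcp_seconds": 2.5, "inp_ms": 200, "cls": 0.1}


def failing_vitals(measured):
    """Names of the metrics that exceed their 'good' limit, sorted."""
    return sorted(
        name for name, limit in GOOD_LIMITS.items() if measured[name] > limit
    )


desktop = {"lcp_seconds": 1.8, "inp_ms": 120, "cls": 0.05}
mobile = {"lcp_seconds": 3.4, "inp_ms": 260, "cls": 0.05}

# Under mobile-first indexing, the mobile measurements are the ones that count.
print(failing_vitals(desktop))
print(failing_vitals(mobile))
```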

5. Technical SEO: the qualifying floor

Technical signals do not directly boost rankings above competitors who already meet the technical bar. They are qualifying conditions — their absence prevents ranking, but their presence does not guarantee it. Key technical factors include: HTTPS, canonical tag correctness, sitemap submission and crawl rate management, structured data (Schema.org markup for articles, FAQs, products, breadcrumbs), correct hreflang implementation for multilingual sites, and page speed as it affects both crawl efficiency and user experience signals.
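As one concrete example of a qualifying condition, Schema.org markup for an article is usually embedded as JSON-LD. The sketch below builds such a snippet; the `article_jsonld` helper and all values passed to it are placeholders, and which properties a given search feature requires should be checked against current documentation.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Serialise a minimal Schema.org Article description as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
snippet = article_jsonld(
    "SEO ranking factors in 2026",
    "Jane Doe",
    "2026-01-15",
    "https://example.com/ranking-factors",
)
html = f'<script type="application/ld+json">\n{snippet}\n</script>'
print(html)
```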

6. Search intent alignment

Google’s systems classify queries into intent types (informational, navigational, commercial, transactional) and match them to document types that historically satisfy that intent. A blog post competing for a transactional query is structurally mismatched regardless of its content quality. Matching the dominant SERP format — whether that is a listicle, a how-to guide, a comparison table, a single answer, or a tool — is a prerequisite for entering the candidate set at scale.

7. Local SEO ranking factors

For queries with local intent, Google uses a separate set of proximity, relevance, and prominence factors to populate the Local Pack and local organic results. The primary local ranking factors are Google Business Profile completeness and activity, NAP (Name, Address, Phone) consistency across the web, proximity to the searcher, local citations in relevant directories, reviews and ratings (quantity, velocity, and sentiment), local link authority from regionally relevant sources, and behavioral signals from Google Maps (clicks, direction requests, calls). These local factors are distinct from organic blue-link ranking signals and must be managed separately.

1. The pre‑patent worldview: “200 factors” as frozen consensus

Most popular “200 ranking factors” guides follow the same pattern: they aggregate earlier lists (Backlinko, Moz, cmlabs, countless blogs), assign informal weights, and present them as an almost complete map of Google’s algorithm. This approach assumes that ranking is a static function of many relatively independent signals, and that the job of SEO is to “tick as many boxes as possible” on that function. What it largely ignores is any dynamic notion of novelty, redundancy, or user‑behavior feedback beyond broad statements like “improve UX” and “reduce pogo sticking”.

| Aspect | Traditional 200‑Factor Guides | What Google Patents Emphasize |
|---|---|---|
| View of ranking | Static mix of many fixed factors, summed or weighted. | Dynamic systems that re‑rank based on information gain, diversity, and behavior. |
| Novelty vs. redundancy | Almost never modeled explicitly; repetition of consensus is expected. | Patents define scores for “information richness” and penalize redundancy. |
| User behavior | Generic advice about CTR and dwell time. | Detailed models of behavior‑driven trust and ranking adjustments. |

2. Information Gain: Google is scoring your delta, not your repetition

In several patents and related discussions, Google formalizes an Information Gain score: a way to measure how much new information a document contributes beyond what is already available to the user. These systems compare candidate pages with a reference set of documents and explicitly favor content that reduces redundancy and increases information richness, especially for follow‑up information needs in multi‑step or conversational searches.

| Claim | Evidence from Patents / Analyses |
|---|---|
| Google measures “how much new information” you add. | Information Gain patents describe scoring documents by their contribution beyond previously viewed content. |
| Redundant pages can be de‑prioritized even if “well optimized”. | Diversity and information‑richness patents detail similarity penalties and mechanisms to avoid near‑duplicate results dominating the SERP. |
| Follow‑up needs are a separate optimization target. | Information Gain patents explicitly tie scoring to “follow‑up information needs”: the next thing users want after reading consensus pages. |

This has a brutal consequence for “200 factors” articles: if your guide is just a more verbose remix of the same items everyone else lists, your net Information Gain score tends toward zero. You are optimizing for inclusion in the candidate set, not for promotion in the second‑stage re‑ranking that selects diverse, high‑gain results.

| Content Type | Information Gain Signal | Likely Treatment |
|---|---|---|
| Copy of existing 200‑factor list | High overlap, minimal new concepts. | Recognized as on‑topic but down‑weighted vs. richer, more original documents. |
| Guide that maps consensus + introduces new factor taxonomies, cross‑engine comparisons, and patent‑driven behavior | Lower redundancy, higher semantic delta. | Better Information Gain score, more likely to appear for follow‑up and assistant‑style queries. |

3. The Reasonable Surfer patent (US8117209B1): how link trust actually propagates

Google patent US8117209B1 is the formal specification of the Reasonable Surfer model. It replaces the original PageRank assumption — that a random surfer follows any link with equal probability — with a behavior-learned model where the probability of following each link is predicted from feature vectors built from the link’s anchor text, position, topical relevance, and actual click data from users who encountered that link.

The patent describes two levels of learned rules. General rules apply across all documents: links with topically aligned anchor text, prominent positions, and large font sizes have systematically higher selection probability. Document-specific rules apply to individual sites: the model learns, for example, that links under a particular structural position on a given domain have high click-through rates, while links in footers or those that contain “domainpark” in the target URL have low rates. These weights are periodically updated as behavior data evolves.

For the Ignorance Graph methodology and 2026 ranking strategy, this patent has three direct implications:

First, link quality is behavioral, not static. A link from a highly-engaged document where users regularly click onward to your content carries more weight than a link from a nominally high-authority page that users skim and leave. Building content that becomes a genuine reference node in a topical cluster, where editors and journalists actually link to it and readers follow those links, creates far more value than accumulating passive links from low-engagement contexts.

Second, topical cluster coherence is mechanically rewarded. The patent explicitly scores higher link weights when the source document’s topic cluster matches the anchor text’s topic cluster, which in turn matches the target document’s cluster. This means the Ignorance Graph strategy of occupying a semantically adjacent but underexplored territory, while remaining within the topical radius of established authority clusters, directly maximizes the weight of inbound links from those clusters.

Third, the model is dynamic. Static link counts from tools like Ahrefs or Moz do not capture behavioral link weights. A domain that earns links from high-engagement sources in a specific topic cluster can outrank a domain with more total links but lower behavioral weighting. This is why smaller specialist sites can outrank large generalist domains in niche areas: their link graph, while smaller, may be entirely within high-behavioral-weight topical nodes.

4. Diversity rankers: why being “different” is now a ranking asset

A separate line of patents focuses on diverse search results: taking an initial set of relevant documents and re‑ranking them to maximize diversity across subtopics, sources, or feature dimensions. These systems explicitly reward results that cover alternative angles or facets, even if those documents are slightly less similar to the query than the top consensus answer.

| Claim | Patent Logic |
|---|---|
| Search aims to avoid “SERP monoculture”. | Diversity patents specify that top results should not be near‑identical, but should span diverse aspects of the information space. |
| Documents can rank because they differ, not despite it. | Re‑ranking algorithms introduce diversity‑driven boosts, promoting some results precisely because they add variety. |
| Over‑optimized consensus clones become substitutable. | If ten pages cover the same factors in the same way, any single one is replaceable; diversity rankers make room for non‑clones with new angles. |

For “SEO ranking factors” this means: a document that consciously deviates from the consensus template — by introducing new conceptual structures, by critiquing the 200‑factor myth, or by mapping information gaps — can be algorithmically favored as a diversity contribution.

| Approach | Effect under Diversity Rankers |
|---|---|
| Pure consensus replication (same headings, same factors) | Competes in a crowded similarity cluster; limited diversity bonus. |
| Consensus + explicit “ignorance” and pre‑consensus factors | Forms a distinct sub‑cluster; more likely to be selected as a diverse result. |
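A standard way to express this kind of re-ranking is Maximal Marginal Relevance (MMR), a well-known technique from the information retrieval literature. It is used here as a stand-in; the patents are not claimed to implement MMR. The document ids, topic sets, relevance scores, and the `lam` trade-off below are all invented for illustration.

```python
def jaccard(a, b):
    """Set overlap in [0, 1]; 1.0 means identical topic coverage."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mmr_rerank(docs, relevance, lam=0.7, k=3):
    """Greedy MMR: pick relevant docs, penalising similarity to earlier picks."""
    selected = []
    remaining = sorted(docs)  # sorted for deterministic tie-breaking
    while remaining and len(selected) < k:
        def mmr_score(doc_id):
            max_sim = max(
                (jaccard(docs[doc_id], docs[s]) for s in selected), default=0.0
            )
            return lam * relevance[doc_id] - (1 - lam) * max_sim
        best = max(remaining, key=mmr_score)
        selected.append(best)
        remaining.remove(best)
    return selected


docs = {
    "clone1": {"links", "content", "speed"},
    "clone2": {"links", "content", "speed"},
    "critique": {"patents", "information", "gain"},
}
relevance = {"clone1": 0.9, "clone2": 0.88, "critique": 0.7}

by_relevance = sorted(docs, key=relevance.get, reverse=True)[:2]
diversified = mmr_rerank(docs, relevance, k=2)
print(by_relevance, diversified)
```

Ranked by relevance alone, the two clones take both slots. Once similarity to already selected results is penalised, the second clone becomes substitutable and the less similar document takes its place.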

5. Trust & user behavior: when checklists lose against click patterns

Google has also patented models that rank documents based on user behavior and derived trust, not just static content features. These approaches incorporate click‑through rates, dwell time, pogo‑sticking, host‑level loyalty, and query‑chain behavior into a “quality” or “trust” estimation that can boost or demote results over time.

| Behavior Signal | Patented Use |
|---|---|
| Frequent clicks on a result when shown | Indicates higher perceived relevance; can be used to adjust rankings upward. |
| Fast returns to SERP and choosing another result | Signals dissatisfaction; can lead to down‑weighting of the initial page. |
| Repeated use of a domain for a topic | Builds host‑level trust, which patents suggest can propagate through links and recommendations. |
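One way to picture how such signals could combine is a ratio of satisfied clicks to the clicks expected at a given position. Everything in this sketch is an assumption made for illustration: the `behavior_adjustment` formula, the smoothing prior, the expected click-through rate, and the sample counts. None of it comes from a patent or from Google documentation.

```python
def behavior_adjustment(impressions, clicks, quick_returns, expected_ctr, prior=100):
    """Ratio above 1.0 suggests a boost, below 1.0 a demotion (toy model)."""
    # Smooth the observed CTR toward the expected CTR so that a handful of
    # impressions cannot swing the estimate.
    smoothed_ctr = (clicks + prior * expected_ctr) / (impressions + prior)
    # Share of clicks that bounced straight back to the results page.
    pogo_rate = quick_returns / clicks if clicks else 0.0
    satisfied_ctr = smoothed_ctr * (1 - pogo_rate)
    return satisfied_ctr / expected_ctr


# Hypothetical numbers: same position, same expected CTR of 10%.
familiar_list = behavior_adjustment(1000, 100, 60, expected_ctr=0.10)
fresh_guide = behavior_adjustment(1000, 150, 15, expected_ctr=0.10)
print(round(familiar_list, 2), round(fresh_guide, 2))
```

The design point is that clicks alone are not enough: a result that attracts clicks but sends most of them straight back scores below its expected baseline.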

This introduces a second level of obsolescence for “200 factors” guides: even if they perfectly implement all checklist elements, they often fail to create behaviorally distinctive experiences. Users see the same list again, skim for one or two items, and bounce — generating mediocre behavior signals compared to a page that genuinely changes their understanding of ranking.

| Page Type | Typical User Reaction | Behavioral Impact |
|---|---|---|
| Standard 200‑factor list | “I’ve seen this before”, quick scan, return to SERP. | Weak trust signals, little reason for long‑term boost. |
| Patents‑driven, information‑gain‑rich guide | “Wait, this changes the model”, deeper read, shares, revisits. | Strong trust patterns, better host‑level reputation, positive feedback loop. |

6. Ranking factors beyond Google: Bing, Perplexity, and LLM citation signals

For SEO in 2026, “ranking factors” no longer means only Google. A growing share of search traffic and content discovery passes through Bing (which powers Copilot, DuckDuckGo, and a significant share of AI-assisted searches), Perplexity, and direct queries to ChatGPT, Claude, and Gemini. Each of these systems uses a different signal architecture.

| Platform | Key differentiating factors vs. Google | What this means for content |
|---|---|---|
| Bing / Copilot | Stronger weight on social signals (LinkedIn, X/Twitter engagement), direct OpenAI integration, PageRank variant with different link freshness decay | Social distribution matters more; fresher links weighted higher |
| Perplexity | Source citability, factual precision, structured claim + evidence format, recency | Content must be citable at the sentence level; vague claims are bypassed |
| LLM citation (ChatGPT, Claude, Gemini) | Training data inclusion, structured factual density, attribution-friendly formatting, llms.txt/robots.txt AI permissions | Sources cited in LLM outputs tend to be ones with clean, precise, quotable claims and high inbound link authority from academic or specialist sources |
| Google AI Overviews | E-E-A-T weight amplified, direct answer proximity, FAQ schema, structured data, featured snippet eligibility | Content selected for AI Overviews tends to have clear, attributable claims, structured markup, and high behavioral trust scores |

The practical ignorance territory here is large: virtually no “200 ranking factors” guide addresses LLM citation signals, Perplexity ranking, or AI Overview selection criteria as distinct factor sets. These are genuinely pre-consensus topics where content has almost zero competition and high future-facing information gain.

7. A new taxonomy of ranking factors by knowledge state

The most significant structural problem with existing ranking factor guides is that they mix confirmed signals, inferred correlations, anecdotes, and disproven myths into a single undifferentiated list. The Ignorance Graph methodology requires labeling knowledge state explicitly. Here is a working taxonomy for 2026:

| Knowledge State | Definition | Examples for ranking factors |
|---|---|---|
| Confirmed | Directly stated by Google in official documentation, patents, or verified public statements | Core Web Vitals (page experience ranking signal); HTTPS; Mobile-first indexing; Content quality (Helpful Content system); Links (PageRank-derived authority) |
| Patent-inferred | Described in granted patents but not publicly confirmed as actively deployed | Reasonable Surfer behavioral link weighting (US8117209B1); Information Gain scoring; Query-independent quality scores derived from usage data |
| Correlation-based | Observed statistical relationships in large-scale SERP studies; not causal | Word count and rankings (correlated, not causal); Number of images; Exact keyword match in title |
| Speculative / pre-consensus | Plausible based on system architecture and partial evidence but not confirmed | LLM citation weighting as a future link signal; behavioral trust as a factor in AI Overview selection; Cross-engine consistency of entity representation as a trust signal |
| Myth / disproven | Widely repeated in guides but contradicted by Google statements or controlled experiments | Bounce rate as a direct ranking signal (Google has confirmed analytics data is not used); Domain age as a quality signal; Meta keywords tag |
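Labeling knowledge state explicitly is straightforward to operationalize in a content audit. The sketch below tags a handful of factors from the table with an enum and groups them by state; the `KnowledgeState` class and `group_by_state` helper are illustrative names, not part of any existing tool.

```python
from collections import defaultdict
from enum import Enum


class KnowledgeState(Enum):
    CONFIRMED = "Confirmed"
    PATENT_INFERRED = "Patent-inferred"
    CORRELATION = "Correlation-based"
    SPECULATIVE = "Speculative / pre-consensus"
    MYTH = "Myth / disproven"


# A few entries taken from the table above.
FACTORS = {
    "HTTPS": KnowledgeState.CONFIRMED,
    "Core Web Vitals": KnowledgeState.CONFIRMED,
    "Reasonable Surfer link weighting": KnowledgeState.PATENT_INFERRED,
    "Word count": KnowledgeState.CORRELATION,
    "LLM citation weighting": KnowledgeState.SPECULATIVE,
    "Meta keywords tag": KnowledgeState.MYTH,
}


def group_by_state(factors):
    """Map each knowledge state to the sorted factor names carrying it."""
    grouped = defaultdict(list)
    for name, state in factors.items():
        grouped[state].append(name)
    return {state: sorted(names) for state, names in grouped.items()}


grouped = group_by_state(FACTORS)
for state in KnowledgeState:
    print(f"{state.value}: {', '.join(grouped.get(state, []))}")
```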

8. The 4 common SEO mistakes that no “ranking factors” list prevents

The canonical SEO mistakes of 2026 are not technical omissions — most teams cover the basics. They are strategic misalignments that arise precisely from over-reliance on factor checklists:

1. Consensus cloning at scale. Publishing content that is a more detailed version of what already ranks, without any meaningful information gain, feeds the exact system that will down-rank you in the second-stage re-ranker. The checklist tells you to make “comprehensive” content; the patent system tells you to make content with a positive marginal contribution to the information landscape.

2. Ignoring follow-up intent. Information Gain patents are explicitly tied to follow-up information needs. Most content strategies optimize for the primary query and ignore the query chain. A user who reads a “what are SEO ranking factors” page next searches for “how do I improve my E-E-A-T” or “does bounce rate affect ranking” or “how does Reasonable Surfer work”. Content that anticipates and answers those follow-up queries, rather than restating the primary answer, has a structural advantage in the re-ranking stage.

3. Treating link building as volume accumulation. Under the Reasonable Surfer model, 10 links from high-behavioral-engagement sources in your topic cluster outweigh 100 links from low-engagement, topically misaligned sources. Link building strategy that ignores the engagement characteristics of the linking page is optimizing for a model that has not described Google’s system since at least 2012.

4. Neglecting behavioral trust design. If a page’s interaction design generates high pogo-sticking — because the user found nothing new, skimmed the familiar list, and returned to the SERP — it is training Google’s model to down-weight it. Behavioral trust is a compounding signal: early positive behavior patterns generate ranking boosts that increase impressions, which generate more behavioral data, which either reinforce or degrade the initial boost.

9. Pure SERP‑Consensus SEO vs. SERP + Ignorance Graph

Seen through this patent lens, traditional “SERP‑consensus SEO” — creating content that matches what already ranks as closely as possible — is only half a strategy. The missing half is a systematic method to identify and exploit the ignorance surface: those subtopics, contrasts, and future‑facing signals that consensus guides systematically omit.

| Dimension | Pure SERP‑Consensus SEO | SERP + Ignorance Graph SEO |
|---|---|---|
| Goal | Match existing top pages as closely as possible. | Match core expectations, then maximize useful deviation. |
| Content mix | ≈ 90–100% consensus information, minor cosmetic updates. | ≈ 60–70% consensus to anchor intent, 20–30% high‑gain ignorance content, 5–10% meta‑explanation. |
| Information Gain | Low to zero; often treated as redundant. | Explicitly optimized; new taxonomies, cross‑engine matrices, pre‑consensus factors. |
| Diversity ranker treatment | One of many near‑identical documents; limited diversity boost. | Distinct angle; more likely to be selected to enrich SERP variety. |
| User behavior / trust | Short visits, low “wow” factor, weak brand queries. | Higher engagement, more shares and branded recall; better trust signals. |
| AI Overview / LLM citability | Low: generic claims already present in training data. | Higher: novel claims, precise attribution, structured knowledge increase citability. |

10. A patent‑aligned blueprint for “SEO ranking factors” content

What does a patent‑aligned, future‑proof “SEO ranking factors” pillar look like? At minimum, it should be designed as a consensus floor with an ignorance ceiling: a page that is clearly recognized as part of the same topical cluster, but that systematically moves beyond what the cluster already knows.

| Layer | Purpose | Minimum threshold |
|---|---|---|
| Consensus floor (~60–70%) | Enter the candidate set by covering the shared core of the topic. | Must directly answer all high-volume PAA queries (what is a ranking factor, how many are there, what are the most important ones, the three stages, local SEO factors) before the information-gain layer activates. |
| Ignorance / information‑gain layer (~20–30%) | Maximize delta vs. existing guides while staying useful. | Must introduce at least one structural element absent from all current top-10 results: a knowledge-state taxonomy, a cross-engine comparison, a patent analysis, or a pre-consensus speculative layer with labeled epistemic status. |
| Meta‑layer (~5–10%) | Explain to readers why this model is needed and how patents support it. | Walk through information‑gain, diversity, and trust patents, showing how they invalidate checklist‑only thinking. This section is itself a diversity signal; no other ranking factors guide contains it. |
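The layer shares in the blueprint can be checked against a draft once its sections are tagged by layer. A rough sketch, assuming word count is an acceptable proxy for share (the blueprint does not say how the percentages are measured); the layer keys and the sample counts are hypothetical.

```python
# Target ranges from the blueprint table, as fractions of the whole page.
TARGET_MIX = {
    "consensus": (0.60, 0.70),
    "ignorance": (0.20, 0.30),
    "meta": (0.05, 0.10),
}


def mix_report(word_counts):
    """Per layer: (share of total words, whether it falls in the target range)."""
    total = sum(word_counts.values())
    report = {}
    for layer, (low, high) in TARGET_MIX.items():
        share = word_counts.get(layer, 0) / total
        report[layer] = (round(share, 2), low <= share <= high)
    return report


# Hypothetical drafts with sections already tagged by layer.
balanced = mix_report({"consensus": 1300, "ignorance": 540, "meta": 160})
clone = mix_report({"consensus": 1900, "ignorance": 100})
print(balanced)
print(clone)
```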

In that sense, “200 ranking factors” guides are not just incomplete; they lock you into an optimization regime that is misaligned with how modern ranking systems are legally and technically described to work. The more you chase consensus symmetry, the less you contribute to information gain and diversity — and the more you depend on external authority signals like links to compensate.

Scientific‑Technical Foundations

Information Gain and Novelty

Google’s Information Gain patent describes how documents are scored for newness relative to already-seen content, and how this scoring influences which pages are surfaced for follow‑up queries and featured results. Older patents on “information richness” and diversity describe mechanisms to avoid SERP redundancy by re‑ranking near‑duplicate documents downward and promoting diverse, information‑rich alternatives.

The Reasonable Surfer Model and Behavioral Link Weighting

Google patent US8117209B1 (Ranking documents based on user behavior and/or feature data, Jeffrey Dean et al., granted 2012) formalizes a system that models the probability a user will follow each link on a page, based on feature vectors from the link’s properties and historical click behavior. Document ranks are assigned as a weighted function of the ranks of linking documents, where the weights reflect these behavioral probabilities rather than raw link counts. The model is dynamic: weights are updated as behavior data changes. This patent is the foundational mechanical basis for the claim that link quality in modern Google is behaviorally determined, not structurally counted — and that the Ignorance Graph’s goal of becoming a genuine reference node in a topical cluster has direct, quantifiable effects on link weight propagation.

User Behavior and Trust

Patents on ranking based on user behavior and trust outline models in which click‑through rates, dwell time, pogo‑sticking, and long‑term interaction with particular hosts are used as signals to estimate perceived quality and trustworthiness. These behavior‑driven scores can be combined with content‑based features to adjust rankings over time, giving an advantage to sites that repeatedly satisfy users even when their raw link metrics lag behind incumbents.

Static Factor Lists vs. Dynamic Ranking Systems

Analyses of contemporary ranking factors note that public “200 factors” compilations mainly aggregate heuristic, correlation‑based, or anecdotal signals, while patent and documentation evidence increasingly emphasizes dynamic feedback loops, semantic understanding, and user‑centric optimization criteria. This divergence explains why purely checklist‑driven SEO often underperforms in an ecosystem that is legally and technically designed to prefer information‑rich, behaviorally trusted, and diversity‑contributing content. The 80/20 rule is a useful heuristic for this reality rather than a measured ratio: a small share of signals (content quality, behavioral trust, and topically-weighted link authority) accounts for most ranking outcomes, while the remaining checklist items serve primarily as qualifying conditions rather than competitive differentiators.
